And, unfortunately for us, they are really quite good at it.

Tigers can magically blend in with their surroundings, giving us only glimpses of their true form.

But there’s much more to fear from tigers than simply being eaten alive.

It has to do with how we see them while they’re hiding in the grass.

If you’ve attended any of my public talks in the past, chances are you’ve heard me speak about the lesson of the “Tiger in the Grass.” Those of you who have will probably recognize many of the things I mention in this post. But I’ve never actually written about this lesson before, and I want to make sure I expand on it in this blog for a wider audience. I believe it is a very helpful lesson for pioneers exploring new technologies and mediums of expression.

Meet the Brain. The most amazing Pattern Recognition system in the world.

Pattern Recognition is defined as “the act of taking in raw data and taking an action based on the category of the pattern.” Our human brain is really good at pattern recognition, and it does it all the time.

The reason we’re so good at it is pretty simple. It’s a huge evolutionary advantage. The faster we can figure out a situation, the more likely we will survive.

Here’s an example.

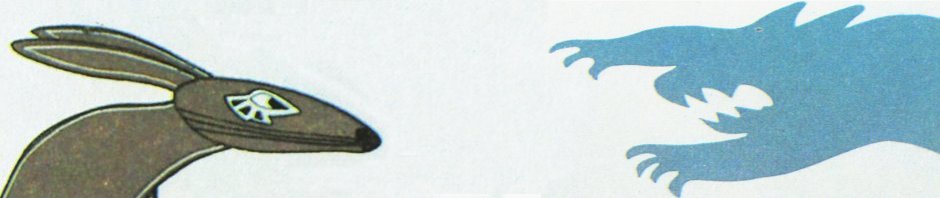

Ogg and Throgg are two cavemen. They are wandering in the grassy plains. In front of them is a tiger, hiding in the grass.

Because the tiger is very good at hiding, Ogg and Throgg can only see a few parts of the tiger. A single yellow eye peering out of the foliage. The white tip of a tufted tail twitching on the ground.

Ogg looks and thinks, “Hmmm. I think there’s an animal over there. But I can’t see enough of it to know what kind of animal.” So Ogg walks closer to collect more data.

Meanwhile, Throgg looks and thinks, “Uh oh. That yellow eye and tufted tail. What does that remind me of?” Throgg’s mind takes the partial data and extrapolates. In his mind’s eye he now sees a tiger in full form, with sharp claws and pointy teeth. He flips out, his limbic system kicking into high gear, and runs away.

Ogg is lunch. Throgg survives. And Throgg’s children inherit a bit of his cleverness in tiger pattern recognition.

There are many other examples of how quick and efficient pattern recognition can improve basic human survival. Like recognizing healthy things to eat, finding good mates, and navigating hostile environments.

The world is full of partial data. And as human beings observing the world, we spend most of our time filling in the gaps.

We do this all the time. And not just with things that physically exist. We also do it with more abstract concepts.

Like language.

Here’s a fun example you can try out for yourself. Try reading the following paragraph.

Aoccdrnig to rsecareh at Cmabrigde Uinervtisy, it deosn’t mttaer in waht oredr the ltteers in a wrod are, the olny iprmoetnt tihng is taht the frist and lsat ltteer be in the rghit pclae. The rset can be a tataol mses and you can sitll raed it wouthit a porbelm. Tihs is bcuseae the huamn mnid deos not raed ervey lteter by istlef, but the wrod as a wlohe.

Pretty amazing, isn’t it?

Now, here’s the danger. Our brain’s first instinct is to fill in the gaps and decide as quickly as possible, “What does this remind me of?” But it can often get things wrong.

Our brain can completely blow it when presented with something that is completely new but similar to something it knows very well.

Many species exploit this weakness through mimicry. Just present a few visual or behavioral cues that are similar to another species, and you have a great survival strategy.

There’s a deeper lesson in all this. Especially for pioneers exploring new technologies and new mediums of expression.

The telephone is not the telegraph. A movie is not a play. The web is not a brochure.

Human beings are constantly inventing new media and new tools for communication. And whenever something new pops up, because of our brain’s propensity for speedy pattern recognition, we instinctively think “What does this remind me of?”

And this gets us into real trouble. Because, once we treat a new thing as something we’re already familiar with, we lose out on the uniquely new possibilities of the new thing.

History is littered with these mistakes.

When the telephone was first invented, people used it like the telegraph to convey mostly business messages. “Hey, our business uses the telegraph all the time to send memos, and people can read memos out loud much faster!” The idea of the general population using the telephone as a way to have synchronous discussions and stay connected with family and friends was completely alien for many years.

When movies arrived on the scene, everyone noticed that the projected screen looked a lot like a blank set for a play. So filmmakers stuck a single camera on a pole in front of a stage and filmed plays. It took decades before pioneering directors realized they could create a rich new language of cinematography. Like filming scenes with multiple cameras at once, cutting and splicing the developed film to create different perspectives.

And most of you probably remember how the early days of the web were filled with sites that were little more than scanned brochures. “Oh look, you can arrange text and pictures on an electronic page that millions of people can view. Our brochure department will love this. And we’ll save a fortune on printing costs!” The revolution of Web 2.0, businesses like Amazon, and collaboratively created sites like Wikipedia took many years to unfold.

The real danger of the Tiger in the Grass is not the tiger, but the nature of our own mind.

I’ve written a lot about how important it is to remember those things that make us most human. And how, by creating tools and technologies that both leverage and augment what makes us human, we can unlock completely unique possibilities.

But there’s a catch. Sometimes, the things that make us most human can get us into real trouble. Like our over-eager nature towards pattern recognition.

The trick is to be aware of our human qualities. And that includes being aware of how they can sometimes cause us to stumble.

So the next time you are looking at a brand new technology or tool, take a step back to ponder the truly unique qualities of it. Ignore what it reminds you of. Don’t use it in ways that feel comfortably familiar and merely optimize things you are already doing.

You’ll have to work really hard at this. Your brain’s instincts will be fighting against you all the way.

If you persevere, you’ll come face-to-face with a much more desirable human quality.

Innovation.

-John “Pathfinder” Lester

Right after I posted this piece, I stumbled on this:

http://www.thewildernessdowntown.com/

An amazing example of how to use the web in a way unlike any other medium. Simply breathtaking.

Thanks for the link, that Arcade Fire site is amazing. Such an outstanding example of how strong memory can be and how personal the future of emergent/online media will be.

Spoiler Alert: I mean, who didn’t get goosebumps when they turned down your childhood street?? Fantastic.

We’re so good at pattern-recognition, sometimes we think we’re coming face-to-face with something that is not a face: pareidolia.

That reminds me of an experiment we did in an art class when I was a kid.

One person was told to draw a big squiggly circle. Any kind. A second person was told to add a small regular circle inside the squiggly one, close to but not touching the inside edge. Finally, the picture was given to a third person, who was told to identify what it was.

Invariably, the picture was identified as a profile of a weird face.

But wait a second, so now it is the attacking alpaca which is more dangerous, if all us Throggs are watching for the tiger?

Great points. A related source of self-deception is the tendency to generalize from a sample of one — in this case to assume that the way we use a particular technological tool or device is the way everyone does. As someone who spends a lot of time looking at data, I am continually reminded of how much variation there is in attitudes and behaviors related to almost everything and how my own personal attitudes and behaviors are almost never representative of anything.

And so a virtual world is not a …………….

Good question. 🙂 And I deliberately didn’t post my many thoughts on that question when I wrote this article. Felt it would be more interesting to have everyone’s ideas surface (mine included) in a discussion.

I think a big part of the challenge in answering that question is the fact that virtual worlds are many things. They are also full of metaphor, and some of the metaphors are helpful while others are pretty detrimental.

For example, in SL many people run “clubs.” The owners of these clubs have built places for people to gather, socialize and dance. These clubs often feature live music (both live performances and live DJs).

Club owners build these places in ways that reflect real world clubs. Dance floors, booths, stages for performances, and even bars with barstools. So the “club metaphor” is very strong.

And in most ways, this metaphor holds up very successfully. If you watch how people behave in virtual clubs, it is almost identical to what you’d see in a real life club.

But there’s one area where the metaphor breaks down completely. And that’s in the business model for the virtual club owner.

In real life, club owners make most of their revenue by selling drinks to patrons. But avatars don’t spend money on virtual drinks.

You often see people in SL fully embracing the design of clubs in real life. And unfortunately they all eventually face the hard fact that the primary way to monetize patrons in real life simply doesn’t work in SL.

So, I’d start with: “Virtual worlds are very similar to many things in real life. But they are not completely those things. And in those differences you’ll find many practical challenges, such as how to create viable business models.”

Good question on a great post. I know I’ve brought Marshall McLuhan up here before, but I think after all these years he’s still at the cutting edge of thinking on this stuff. One of his ideas was that people tend to initially view and use any new technology in terms of the ones that came before it. And that it takes time for people to figure out how to take advantage of its potential. One example I remember is that filmmakers initially shot movies as if they were plays … a single continuous shot from a fixed camera position. Eventually, that limited vocabulary was extended to the fast moving “cuts” in distance, time and place that we’re used to today. I suspect that we’re only beginning to see that type of paradigm-shifting use of virtual worlds.

@joe

… game!

“Then I asked: `Does a firm persuasion that a thing is so, make it so?’ He replied: `All Poets believe that it does, and in ages of imagination this firm persuasion removed mountains; but many are not capable of a firm persuasion of anything.’”

William Blake (1757-1827)

by trying to pigeon hole 3d realtime immersive media into the term “virtual world” you end up with more failures than successes using the media. This is not new… but has been the repeated failed business plan/marketing plan of almost all realtime 3d media platforms/systems since the early/mid 90s when they reached a mass usable level.

Virtual reality – the buzz term before “virtual worlds”- mainly because it was driven by the myths of needed HMDs ( tell that to the ID guys at the VR mekler show in NY 1992), while virtual worlds needed the myth of human shaped avatars( sl avatar id silliness)…. both memes failed to encompass the larger media capabilities and help keep fragmenting and cycling the adoption of the media.

Besides…every flash game from 1999 became a “virtual world” as it was remade in 2009 for facebook/gambling clicking.

yes. the danger is how we fail to see…. but then again, we’ve created a generation that desires “glasses” and other tech augmentations…

slavery never had it so good:)

“by trying to pigeon hole 3d realtime immersive media into the term “virtual world” you end up with more failures than successes using the media”

I think you summed things up nicely, cube3.

My problem is that there is no succinct and commonly used term for “3d realtime immersive media,” so I find myself using the term “virtual world” even when it doesn’t fully fit.

Anyone have a better term?

like “nature” or “reality” it’s hard to find a single term that can be used for marketing the medium….. maybe one day, after wide adoption, it’ll be like “TV” and have a name based on a device…..or software brand

secondlife was in a way “almost” a win in that way.. but they wont be around as a brand long enough to be there when it happens…

we dont call the internet AOL..:) though many think we may call it Google one day…

and of course any use of just “3d” gets coopted every few years….

in 1995 it meant a rendering, in 2005 it meant flash vector rendering online, and today it means 3d “stereo” again as it did back in the 1950s..

and soon i suppose it may be back to flash3d again..unless unity does become the macromedia of the next 3 years…. waiting for embedded 3d in all browsers…..

and the seasons they go round and round…:)

Maybe we should make one up?

How about 3Dsim (for 3D synchronous immersive media)?

It’s short enough to stick it in front of specific forms of 3Dsim, so 3Dsim games, 3Dsim worlds, 3Dsim simulations…

Let’s imagine. Imagine that the Rome of A.D.320 has been rendered in exact detail in 3D models

http://www.romereborn.virginia.edu/gallery-current.php

Imagine it has been developed into a virtual environment accessible to explore concurrently with hundreds of like-minded individuals with one URL click and when you enter VOIP just works.

Imagine guided tours around the Pantheon and the Colosseum, live acts at the Circus Maximus and the Theatre of Pompey. Imagine museums within the Rome reconstruction exhibiting the artifacts which have survived from the era of the Roman Empire.

Just keep imagining for all we need is at hand except the one single item which is stubbornly withheld, the will to let it happen.

gibson on google….

Jeremy Bentham’s Panopticon prison design is a perennial metaphor in discussions of digital surveillance and data mining, but it doesn’t really suit an entity like Google. Bentham’s all-seeing eye looks down from a central viewpoint, the gaze of a Victorian warder. In Google, we are at once the surveilled and the individual retinal cells of the surveillant, however many millions of us, constantly if unconsciously participatory. We are part of a post-geographical, post-national super-state, one that handily says no to China. Or yes, depending on profit considerations and strategy. But we do not participate in Google on that level. We’re citizens, but without rights.

rome.. yeah woopie but were all be the slaves…..? SPQR was the virtual world game from the late 90s made by slave student labor at Columbia, by some greedy teachers..

do we really need this again?

learn some history… and now gibson finally gets it…. ZERO HISTORY….

well took him long enough…. :).. and mcluhan hasnt changed.. only those who can find his words online do:)

